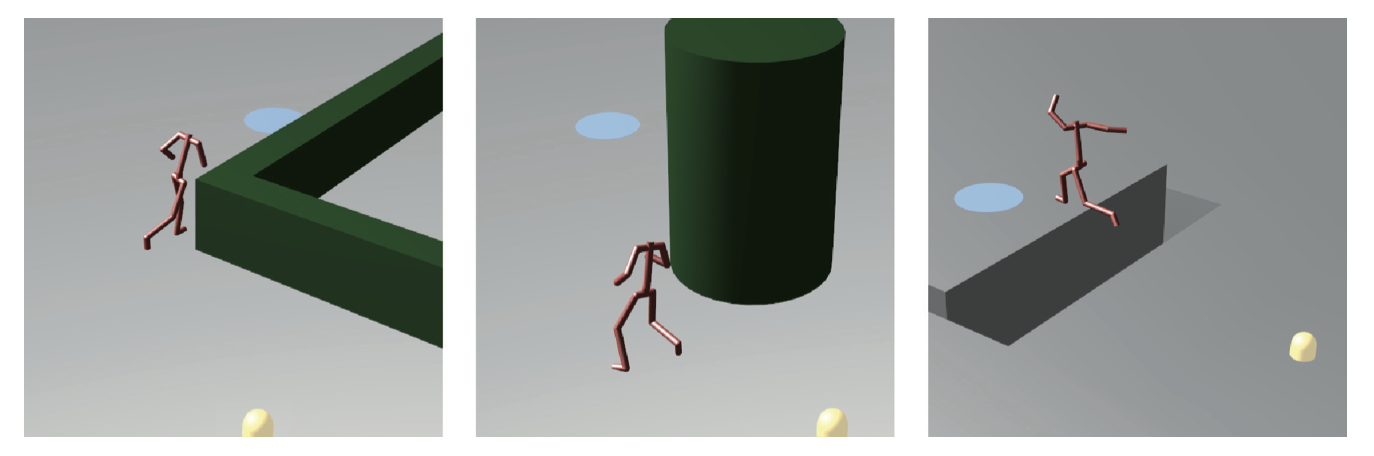

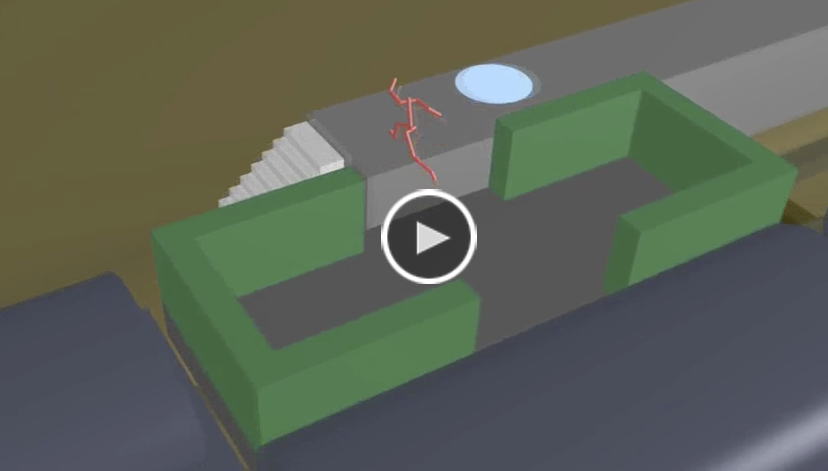

We present a technique for efficiently synthesizing animations of characters traversing complex, dynamic environments. Our method uses parameterized locomotion controllers, each corresponding to a specific motion skill such as jumping or obstacle avoidance. The controllers are constructed from motion capture data using reinforcement learning. A space-time planner determines the sequence in which the controllers must be executed to reach a goal location, and it admits a variety of cost functions, so that it can produce paths exhibiting different behaviors. Because it plans in both space and time, the planner can discover paths through dynamically changing environments even when no path exists in any static snapshot. Because the parameterized controllers handle the low-level navigational tasks, the planner can operate efficiently at a high level, which makes interactive replanning rates possible.
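The claim that planning in space and time can succeed where every static snapshot fails can be illustrated with a toy example. The sketch below is not the authors' planner. It assumes a hypothetical 1D corridor with two doors that open and close out of phase. It also assumes three hypothetical stand-in "controllers" (`step_forward`, `step_back`, `wait`), each lasting one time step, and uses a generic uniform-cost search over `(position, time)` states with a pluggable cost function.

```python
import heapq
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical stand-ins for locomotion controllers: each one shifts the
# character's position by the given amount and takes one time step.
CONTROLLERS: Dict[str, int] = {"step_forward": 1, "step_back": -1, "wait": 0}

CORRIDOR_LENGTH = 5  # positions 0..4
START, GOAL = 0, 4


def blocked(pos: int, t: int) -> bool:
    """Toy dynamic environment: two doors that open and close out of phase."""
    if pos == 1:  # door A is closed at odd times
        return t % 2 == 1
    if pos == 3:  # door B is closed at even times
        return t % 2 == 0
    return False


def static_path_exists(t: int) -> bool:
    """Search a frozen snapshot of the environment at time t."""
    seen = {START}
    frontier = [START]
    while frontier:
        pos = frontier.pop()
        if pos == GOAL:
            return True
        for dx in (1, -1):
            nxt = pos + dx
            if 0 <= nxt < CORRIDOR_LENGTH and nxt not in seen and not blocked(nxt, t):
                seen.add(nxt)
                frontier.append(nxt)
    return False


def space_time_plan(cost: Callable[[str], float], horizon: int = 50) -> Optional[List[str]]:
    """Uniform-cost search over (position, time) states.

    Returns the cheapest controller sequence that reaches GOAL, or None.
    Swapping the cost function changes which path is preferred.
    """
    # Heap entries: (accumulated cost, time, position, controller sequence).
    heap: List[Tuple[float, int, int, List[str]]] = [(0.0, 0, START, [])]
    best: Dict[Tuple[int, int], float] = {(START, 0): 0.0}
    while heap:
        g, t, pos, plan = heapq.heappop(heap)
        if pos == GOAL:
            return plan
        # Skip stale heap entries and states beyond the planning horizon.
        if g > best.get((pos, t), float("inf")) or t >= horizon:
            continue
        for name, dx in CONTROLLERS.items():
            nxt, nt = pos + dx, t + 1
            if not (0 <= nxt < CORRIDOR_LENGTH) or blocked(nxt, nt):
                continue
            ng = g + cost(name)
            if ng < best.get((nxt, nt), float("inf")):
                best[(nxt, nt)] = ng
                heapq.heappush(heap, (ng, nt, nxt, plan + [name]))
    return None


if __name__ == "__main__":
    # No frozen snapshot has a path: one of the two doors is always closed.
    print(any(static_path_exists(t) for t in range(10)))  # False
    # The space-time search waits for each door to open in turn.
    print(space_time_plan(cost=lambda name: 1.0))
```

With a uniform cost of 1 per controller, the returned plan waits once at the start, passes door A at an even time, waits again, and passes door B at an odd time. A different `cost` (for example, one that penalizes `wait`) would change how competing paths are ranked, mirroring in miniature the role of the planner's cost functions.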