Publications

Matthias Müller, Samarth Brahmbhatt, Ankur Deka, Quentin Leboutet, David Hafner, and Vladlen Koltun

International Conference on Robotics and Automation (ICRA), 2024

Yunlong Song, Angel Romero, Matthias Müller, Vladlen Koltun, and Davide Scaramuzza

Science Robotics, 8(82), 2023

Elia Kaufmann, Leonard Bauersfeld, Antonio Loquercio, Matthias Müller, Vladlen Koltun, and Davide Scaramuzza

Nature, 620, 2023

Takahiro Miki, Joonho Lee, Jemin Hwangbo, Lorenz Wellhausen, Vladlen Koltun, and Marco Hutter

Science Robotics, 7(62), 2022

Antonio Loquercio, Elia Kaufmann, René Ranftl, Matthias Müller, Vladlen Koltun, and Davide Scaramuzza

Science Robotics, 6(59), 2021

Matthias Müller and Vladlen Koltun

International Conference on Robotics and Automation (ICRA), 2021

Joonho Lee, Jemin Hwangbo, Lorenz Wellhausen, Vladlen Koltun, and Marco Hutter

Science Robotics, 5(47), 2020

Elia Kaufmann, Antonio Loquercio, René Ranftl, Matthias Müller, Vladlen Koltun, and Davide Scaramuzza

Robotics: Science and Systems (RSS), 2020 (Nominated for the Best Paper Award)

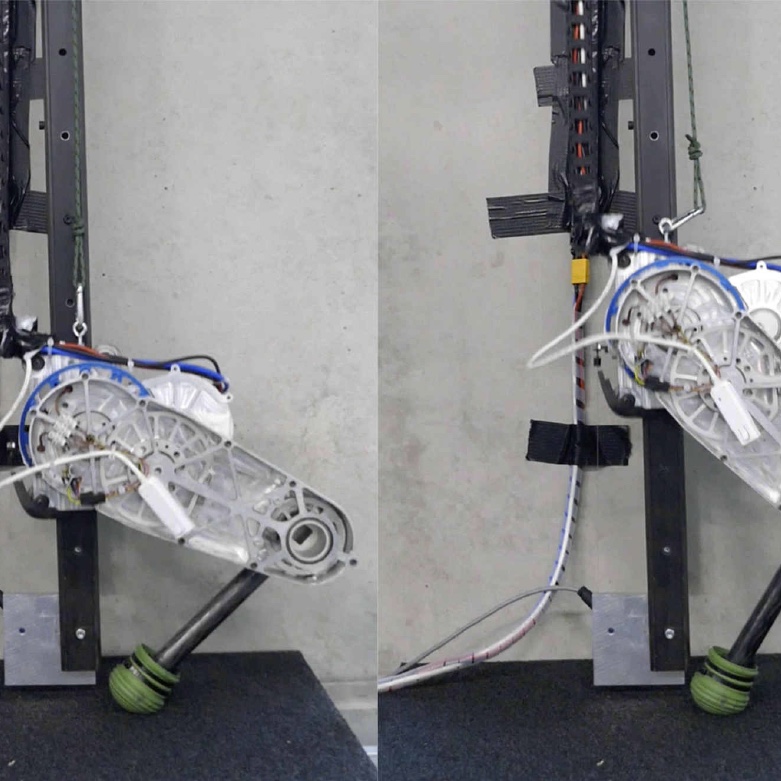

Jan Carius, René Ranftl, Vladlen Koltun, and Marco Hutter

IEEE Robotics and Automation Letters, 4(3), 2019

Brady Zhou, Philipp Krähenbühl, and Vladlen Koltun

Science Robotics, 4(30), 2019

Elia Kaufmann, Mathias Gehrig, Philipp Foehn, René Ranftl, Alexey Dosovitskiy, Vladlen Koltun, and Davide Scaramuzza

International Conference on Robotics and Automation (ICRA), 2019

Jemin Hwangbo, Joonho Lee, Alexey Dosovitskiy, Dario Bellicoso, Vassilios Tsounis, Vladlen Koltun, and Marco Hutter

Science Robotics, 4(26), 2019

Elia Kaufmann, Antonio Loquercio, René Ranftl, Alexey Dosovitskiy, Vladlen Koltun, and Davide Scaramuzza

Conference on Robot Learning (CoRL), 2018 (Best Systems Paper Award)

Matthias Müller, Alexey Dosovitskiy, Bernard Ghanem, and Vladlen Koltun

Conference on Robot Learning (CoRL), 2018

Jan Carius, René Ranftl, Vladlen Koltun, and Marco Hutter

IEEE Robotics and Automation Letters, 3(4), 2018

Felipe Codevilla, Matthias Müller, Antonio López, Vladlen Koltun, and Alexey Dosovitskiy

International Conference on Robotics and Automation (ICRA), 2018