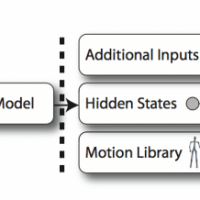

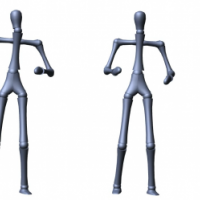

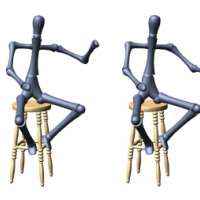

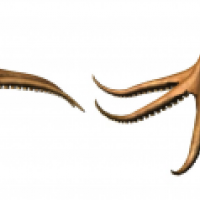

We introduce gesture controllers, a method for animating the body language of avatars engaged in live spoken conversation. A gesture controller is an optimal-policy controller that schedules gesture animations in real time based on acoustic features in the user’s speech. The controller consists of an inference layer, which infers a distribution over a set of hidden states from the speech signal, and a control layer, which selects the optimal motion based on the inferred state distribution. The inference layer, consisting of a specialized conditional random field, learns the hidden structure in body language style and associates it with acoustic features in speech. The control layer uses reinforcement learning to construct an optimal policy for selecting motion clips from a distribution over the learned hidden states. The modularity of the proposed method allows customization of a character’s gesture repertoire, animation of non-human characters, and the use of additional inputs such as speech recognition or direct user control.